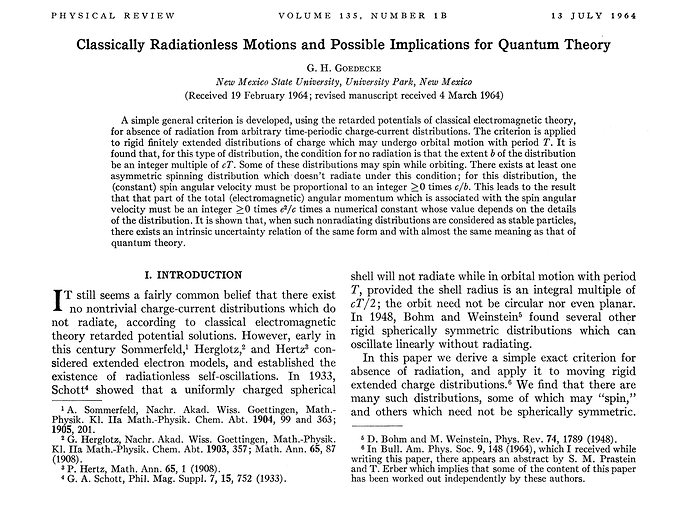

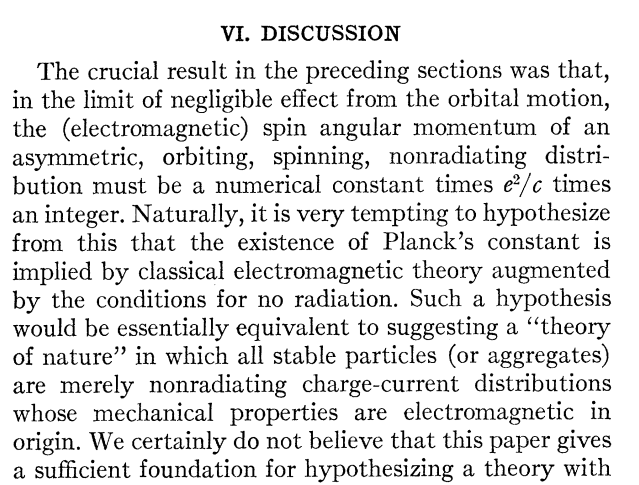

It is intriguing that since 1964 it has been known in the literature that there are radiationless charge current configurations whose general solutions have the same form as the uncertainty relation of quantum theory.

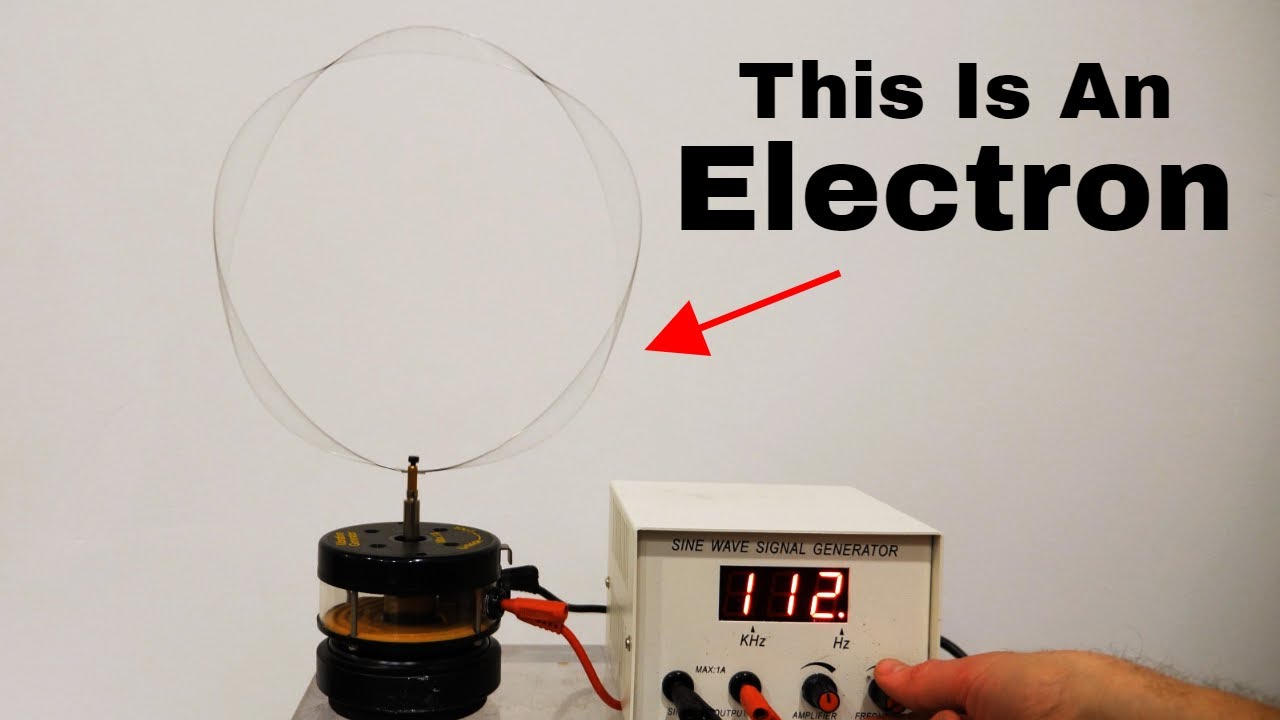

I ran across this body of work while searching for ways of constructing an “antenna” that would not emit EM radiation for our work on longitudinal vector potential wave radio. There is at least one “crackpot” theory of physics based on a model of the electron as a charge current configuration which, in a stable condition (whether an orbital or free electron), does not radiate. But I’ll not discuss that here as it is probably the most extreme point on the scatter plot I described elsewhere and therefore makes people go completely bonkers when I bring it up.

The aforementioned physical theory for the electron (and all massive particles) as non-radiating charge current configurations also predicted that dark matter is highly paramagnetic – and did so in the late 80s on purely theoretic grounds (rather than attempting to explain dark matter observations of apparent magnetism as described in the aforelinked video). This prediction purports to be an inevitable (no free parameter) consequence of the theory. Samples of laboratory-created dark matter filaments are available for analysis from them. This material has been analyzed by a lab in Europe and reported (now published in a peer-reviewed journal) to possess the predicted characteristics.

But when I attempted to get a ballpark quote from the University of Illinois in Urbana (the only physical chemistry lab in the Midwest with the electron paramagnetic resonance equipment capable of reproducing the paper’s measurements that I know of) to do a blind analysis of the material, they refused, insisting that I tell them what it was. So I sent them the (now published) scientific paper that was then in review. When they saw the theory it was based on, they went bonkers. When I told them that this didn’t strike me as the way science proceeds and requested that they tell me why they were unwilling to debunk this “crackpot” theory even if paid, they came back with a response stating that it would take them years to do the analysis.

PS: Part of the reason everyone goes bonkers is this form of dark matter is supposed to be ash from a non-nuclear reaction involving hydrogen that releases energy. Hence the danger of a “Pascal’s Scam” holding out the promise of energy production akin to “cold fusion”.

A quote from the aforementioned – unnamed – “crackpot”:

“God or fate (depending on your world view) dealt me a hard problem, but stupid competition. Not bad in balance.”

I just ran across an intersection with Schantz’s “The Hidden Truth”.

A Royal Society review article “Oliver Heaviside: an accidental time traveller” (emphasis JAB) came up in my google search after the following piqued my interest in Heaviside:

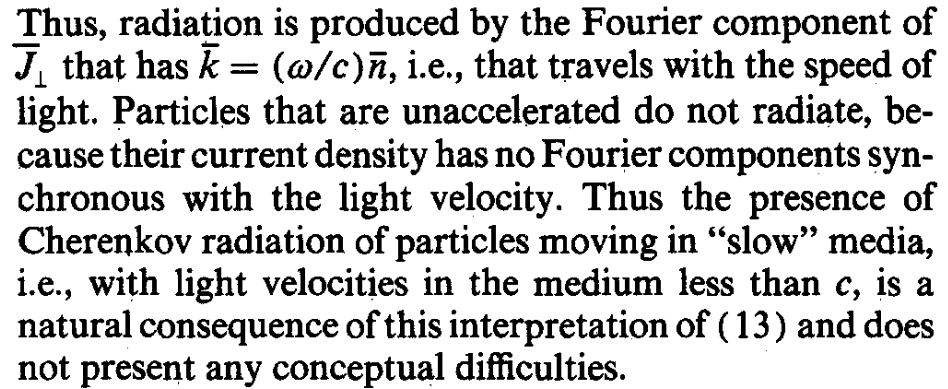

The nonradiation condition was reformulated in a 1986 paper in such a way that it shed new light on Cherenkov Radiation. Here’s the excerpt:

It was this paper, “On the radiation from point charges” by then MIT professor H. A. Haus, that was the basis of the aforementioned theory of dark matter as low energy state “ash” from an energy production process disallowed by QM.
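
A minimal sketch of how a nonradiation condition of this kind connects to Cherenkov radiation, using standard textbook reasoning rather than the paper's own notation (the symbols $\mathbf{J}$, $q$, $\mathbf{v}$, $n$ and $\theta$ are introduced here purely for illustration): a current radiates only if its space-time Fourier transform has components synchronous with waves travelling at the speed of light. For a point charge $q$ in uniform motion with velocity $\mathbf{v}$, with $\theta$ the angle between $\mathbf{k}$ and $\mathbf{v}$:

$$
\begin{aligned}
\mathbf{J}(\mathbf{r}, t) = q\,\mathbf{v}\,\delta^{3}(\mathbf{r} - \mathbf{v}t)
\;&\Longrightarrow\;
\tilde{\mathbf{J}}(\mathbf{k}, \omega) \propto q\,\mathbf{v}\,\delta(\omega - \mathbf{k}\cdot\mathbf{v}) \\
\text{vacuum: }\; \omega = c\,|\mathbf{k}|
\;&\Longrightarrow\; \mathbf{k}\cdot\mathbf{v} = c\,|\mathbf{k}|
\;\Longrightarrow\; \cos\theta = \frac{c}{v} > 1 \quad (\text{no solution for } v < c) \\
\text{medium of index } n\text{: }\; \omega = \frac{c}{n}\,|\mathbf{k}|
\;&\Longrightarrow\; \cos\theta = \frac{c}{n\,v} \quad (\text{solvable once } v > c/n)
\end{aligned}
$$

Read this way, a uniformly moving charge has no light-synchronous Fourier components in vacuum and so does not radiate, while in a medium it radiates only once $v$ exceeds $c/n$, at the familiar Cherenkov angle.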

A highly-cited (even after 20 years) experimental test of the loss of interference pattern after passing through a double slit is published under the title “Origin of quantum-mechanical complementarity probed by a ‘which-way’ experiment in an atom interferometer”:

The principle of complementarity refers to the ability of quantum-mechanical entities to behave as particles or waves under different experimental conditions. For example, in the famous double-slit experiment, a single electron can apparently pass through both apertures simultaneously, forming an interference pattern. But if a “which-way” detector is employed to determine the particle’s path, the interference pattern is destroyed. This is usually explained in terms of Heisenberg’s uncertainty principle, in which the acquisition of spatial information increases the uncertainty in the particle’s momentum, thus destroying the interference. Here we report a which-way experiment in an atom interferometer in which the ‘back action’ of path detection on the atom’s momentum is too small to explain the disappearance of the interference pattern. We attribute it instead to correlations between the which-way detector and the atomic motion, rather than to the uncertainty principle…We show that the back action onto the atomic momentum implied by Heisenberg’s position-momentum uncertainty relation cannot explain the loss of interference.

One of my greatest frustrations in chasing down the voluminous cites is that there is no easy way of separating theoretic papers from experimental/engineering* papers. You have to go into each to see if they actually tested their proposed spin on the cited experimental results.

Here is an example of verbiage from a theoretic paper:

We demonstrate that entanglement between all light fields can be used to erase information about the atom’s path and by that to partially recover the visibility. Thus, our work highlights the role of complementarity on atom-interferometric experiments.

Yeah, they “demonstrate” with math.

Empirical tests should really be in a separate, easily filtered category. However, as was demonstrated with Nature’s response to Oriani’s replication of excess heat in palladium electrolysis, even the most prestigious journals that originated the field of empirical investigations refuse to publish if you don’t have a theoretic justification for your results.

Physics has a very low signal to noise ratio.

It’s almost like some alien mind virus has been spread to keep humanity on Earth.

*Engineering is where people are being forced to find frontiers of science due to the hostility to anomalous empirical results “demonstrated” by the likes of Nature. How long has Nature been this neurotic? Did it start before embarrassment over polywater?