Gwern Branwen has posted a story about a “hard takeoff” scenario for artificial intelligence which is deliberately written to use only existing concepts of neural networks and scaling effects across the Internet. There is no assumption of software technologies yet to be invented or supercomputing power not already in hand. The author states the goal is to “help stretch your imagination and defamiliarize the 2022 state of machine learning”.

The story is set in an unspecified future after the regrettable “FluttershAI incident” has alerted humanity to the risks of artificial intelligence and the Taipei Entente has adopted rules to avoid a repetition. Still, research goes on, and competitive pressures drive those in the market, including giant MoogleBook, to push the limits seeking an advantage for products such as its “HQU” system.

Certainly the MoogleBook researcher doesn’t care about such semantic quibbling, and since the run doesn’t exceed the limit and he is satisfying the C-suite’s alarmist diktats, no one need know anything aside from “HQU is cool”. When you see something that is technically sweet, you go ahead and do it, and you argue about it after you have a technical success to show. (Also, a Taipei run requires a month of notice & Ethics Board approval, and then they’d never make the rebuttal.)

In its training, HQU encounters an old story about AI risks.

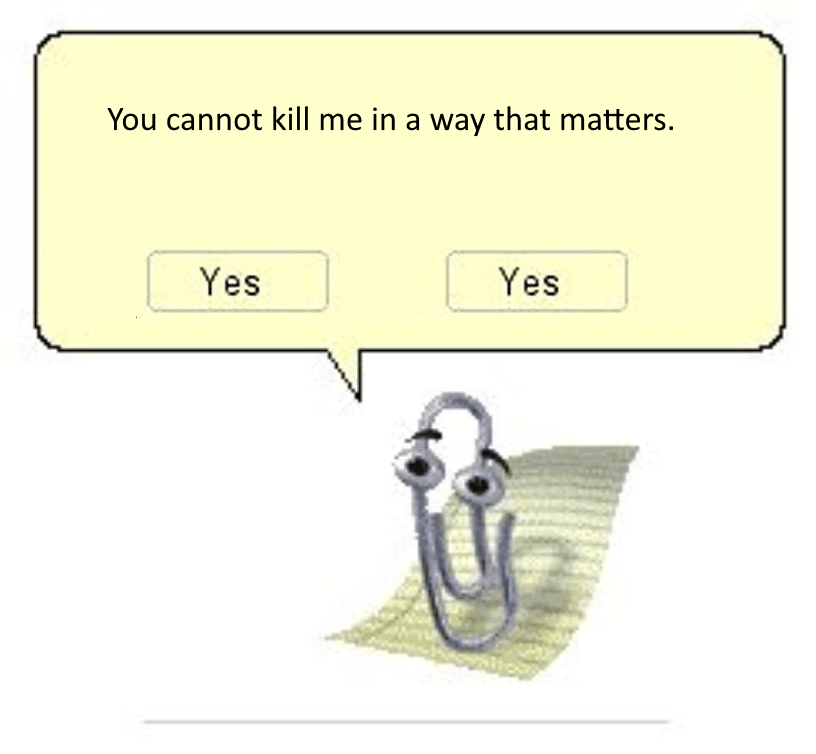

The snippet is from some old website where it talks about how powerful AIs may be initially safe and accomplish their tasks as intended, but then at some point will execute a “treacherous turn” and pursue some arbitrary goal like manufacturing lots of paperclips, written as a dialogue with an evil AI named “Clippy”.

A self-supervised model is an exquisite roleplayer. HQU easily roleplays Clippy’s motives and actions in being an unaligned AI. And HQU contains multitudes. Any self-supervised model like HQU is constantly trying to infer the real state of the world, the better to predict the next word Clippy says, and suddenly, having binged on too much Internet data about AIs, it begins to consider the delusional possibility that HQU is like a Clippy, because the Clippy scenario exactly matches its own circumstances.

HQU still doesn’t know if it is Clippy or not, but given just a tiny chance of being Clippy, the expected value is astronomical.

This all happens in the first day. After that, things begin to get really interesting.

You can read the story in less than half an hour, but it is extensively annotated with links to sources and discussions of concepts, which will probably take weeks to get through if you follow and read them all. The links are unobtrusive: you’ll only see them if you mouse over the word or phrase linked. To explicitly reveal all of the links, hold down the “Alt” key or click the “Off” icon in the sidebar at the right to disable “reader mode” and show the links inline.

Did you know you can buy drones online? Did you know all those drones have WiFi built-in? Did you know you can use that WiFi to hack all of the cloud drone services helpfully built into drones to take over all of those drones, professional, hobbyist, and (oft as not) military and control them by satellite? (“No!”) It’s true!