“A Mind at Play: How Claude Shannon Invented the Information Age”, by Jimmy Soni & Rob Goodman, ISBN 978-1-4767-6668-3, 366 pages (2017).

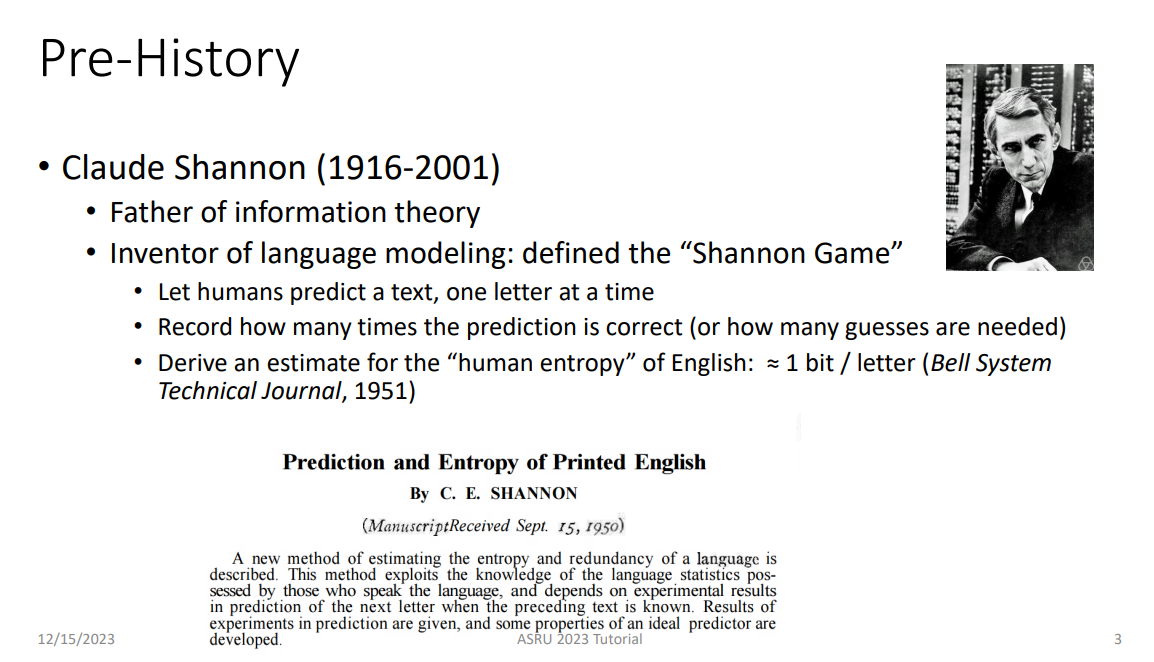

Claude Shannon (1916 - 2001) once said: “A very small percentage of the population produces the greatest proportion of the important ideas”. Although his development of the mathematics that underpins communications technology has been critical to much of the modern world, making him one of that very small percentage, Shannon is relatively little known. This may be due in part to his intense modesty. One small but telling detail: Ludwig Boltzmann, who developed the thermodynamic equation for entropy, had that equation carved prominently onto his gravestone; Shannon’s gravestone similarly carries the equation he developed for communications entropy – but carved onto the back of the headstone, mostly hidden by a bush.

Shannon’s lack of interest in self-promotion clearly presented a challenge to his biographers. Accordingly, Soni & Goodman dug deep, and created this beautifully-crafted & heavily-documented tale which sets Shannon’s life & contributions into their historical contexts. The authors demonstrate the low signal-to-noise ratio which plagued early telegraphy by summarizing the problems with the first trans-Atlantic cables. They set the stage for Shannon’s exploration of machine intelligence by recounting the story of “The Turk”, a chess-playing mechanical mannequin which astonished audiences in the 1820s. (It was a hoax.)

Looking back from today, Claude Shannon’s life seems to belong to a different world rather than simply last century. It was a time when a boy from a small town in Michigan could get a top-notch education; when the US had world-leading industrial research organizations like Bell Labs; when a university would give a prominent academic the freedom to pursue his interests without the burden of “publish or perish”.

Shannon came to the attention of Vannevar Bush in 1936, when he took a job at MIT working on a mechanical calculating device. Bush recognized Shannon’s “almost universal genius” and helped steer his career toward Bell Labs in 1940, where Shannon revolutionized the design of switching devices by applying Boolean algebra. During World War II, Shannon worked on cryptography and other technologies about which little is in the public domain.

While working at Bell Labs, Shannon recognized that the content of a message was irrelevant to the task of transmitting it over a wire in the face of inevitable noise. He summarized years of work on how to optimize message transmission in a 1948 Bell Labs technical publication – “A Mathematical Theory of Communication”. The authors do an excellent job of explaining the key concepts without bogging down the lay reader in too much mathematics. That publication made Shannon the founder of the field of digital communication at the very time that the computer age was beginning to sprout. Some would argue it also marked the end of his significant contributions to science & engineering.

In 1956, Shannon left Bell Labs (although the Labs insisted on keeping him on salary) and took up a professorial position at MIT, where he had the freedom to pursue his own interests – thinking machines (including machines built with his own hands), juggling (both by himself & with machines he built), unicycles, the stock market, even gambling. Working with a collaborator, he built a device the size of a pack of cigarettes which provided a probabilistic edge in predicting the outcome of a spin of a roulette wheel. They made a successful trial run in Las Vegas – and then decided the possibility of running afoul of the Mafia was not worth the risk.

Sadly, Shannon was diagnosed with Alzheimer’s disease in 1983. In 1993 he had to be moved into a nursing facility, where he died in 2001.

Shannon had said “… semantic aspects of communication are irrelevant to the engineering problem”. He gave us the theoretical understanding to vastly improve the engineering efficiency of communications. It might be a comment on the rest of us that we have used much of that high efficiency to perfect the broad distribution of cat videos.