My niece recently graduated from BYU with a CS degree but is getting discouraged about ever finding the kind of life she wants:

A husband with whom she can raise a big family while working from home as a programmer. (You’d think this would be no problem with the LDS… Don’t get me started…)

So I’ve been trying to come up with some way to help her overcome her depression, and I think maybe I’ve found something that will motivate her out of her funk:

She’s seriously into BattleBots (as a fan, although she did some class projects involving bot control) and has written a whole bunch of “mods” for the Unity game engine…

So…

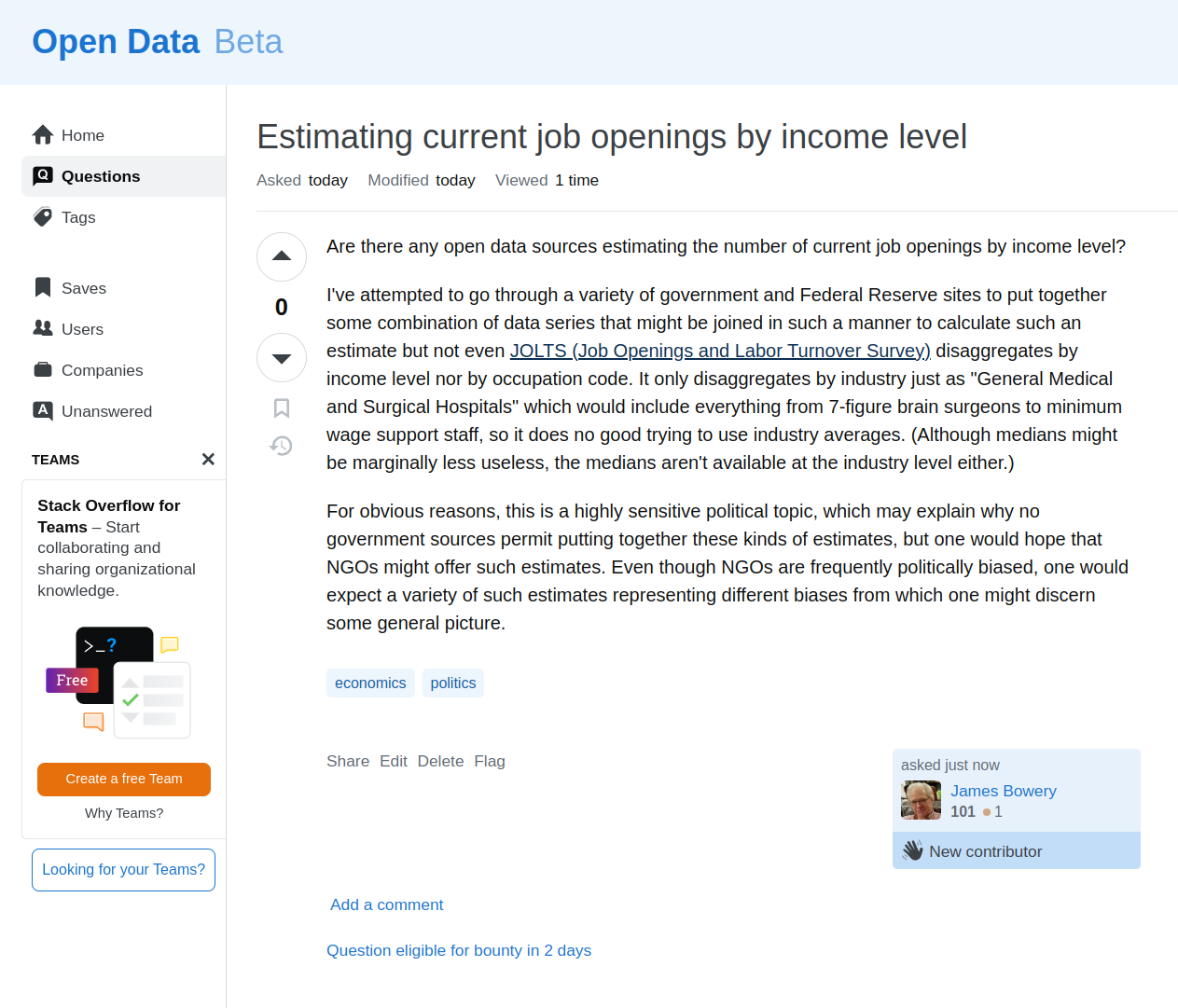

I went looking around for any BattleBots that were doing semi-autonomy, using something like an AI Dojo for training, with an outside-the-arena camera.

What should I discover but Orbitron:

And they’re from the University of Waterloo!

The University of Waterloo is where the Applied Brain Research guys are from:

But the ABR guys caught my attention back in 2019 because their Legendre Memory Unit (LMU) patent focused on dynamical systems identification, which I saw as the key to the future of Algorithmic Information approximation as model selection.

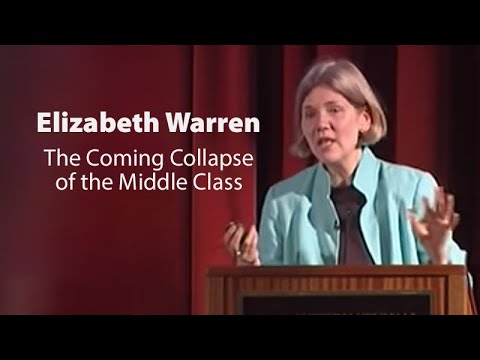

Well, after a few years, the language-modeling hysteria calmed down enough for Silicon Valley to recognize dynamical systems as the way forward in language modeling. But, of course, the academic “authors of confusion” had to come up with another term for dynamical systems identification: “state space modeling”:

Well before “Mamba” (a State Space Model) became The Next Big Thing, I had already been seeking funding for the ABR guys, flying to Houston to meet with some very wealthy folks (who, as it turned out, unfortunately for me, were not very serious about AI). I did this because, as I had hoped they might given their proximity to Algorithmic Information as model selection, they had achieved a factor-of-10 improvement in data efficiency for their language model, well before Mamba.

But because they weren’t in SV, no one cared.

OK, I’m emphasizing this because when I hear “Waterloo” and “AI” together, I take notice: the work coming out of there is likely being undervalued amid the AI hysteria that has taken hold of SV. (Consider Altman’s “$7 Trillion Chip Initiative”, when the ABR guys seemed to have grounded their spiking neural net chip in a neocortical systems model, trainable with their LMU approach to dynamical systems identification. But that’s another story.)
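For readers who haven’t met the LMU: as I understand ABR’s published 2019 formulation, its memory is a small linear state-space system, θ·dm/dt = A·m + B·u, whose d state variables summarize a sliding window of the input u over the last θ seconds in terms of shifted Legendre polynomials. The sketch below builds those (A, B) matrices and integrates them with forward Euler. It is a hypothetical illustration, not ABR’s code: the function names are mine, and Euler is chosen only for brevity (a real implementation would use a more careful discretization).

```python
# Hypothetical sketch of the LMU's linear memory (not ABR's code).
# Continuous-time system: theta * dm/dt = A m + B u


def lmu_matrices(order, theta):
    """Return (A, B) for an LMU memory with `order` state variables,
    already divided by the window length `theta` (seconds)."""
    A = [[0.0] * order for _ in range(order)]
    B = [0.0] * order
    for i in range(order):
        B[i] = (2 * i + 1) * (-1) ** i / theta
        for j in range(order):
            sign = -1 if i < j else (-1) ** (i - j + 1)
            A[i][j] = (2 * i + 1) * sign / theta
    return A, B


def euler_step(m, u, A, B, dt):
    """One forward-Euler step of dm/dt = A m + B u (chosen for brevity)."""
    n = len(m)
    return [
        m[i] + dt * (sum(A[i][j] * m[j] for j in range(n)) + B[i] * u)
        for i in range(n)
    ]


def run_memory(inputs, order, theta, dt):
    """Feed a sequence of input samples through the memory; return the final state."""
    A, B = lmu_matrices(order, theta)
    m = [0.0] * order
    for u in inputs:
        m = euler_step(m, u, A, B, dt)
    return m


if __name__ == "__main__":
    # A constant input should settle to m = [1, 0, ...]: a flat window is
    # captured entirely by the zeroth Legendre coefficient.
    state = run_memory([1.0] * 10_000, order=2, theta=1.0, dt=0.001)
    print([round(x, 4) for x in state])
```

The constant-input check works because the first column of A is exactly −B, so m = [1, 0, …] is the fixed point of A·m + B = 0. Swapping in a different u sequence shows how the state variables track a moving window.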

So these Waterloo BattleBots kids did pretty much the right thing from an AI perspective:

They used a physics game engine (Unity) to create an AI training Dojo, the way Musk is using his Dojo to train Tesla’s autonomous vehicles.

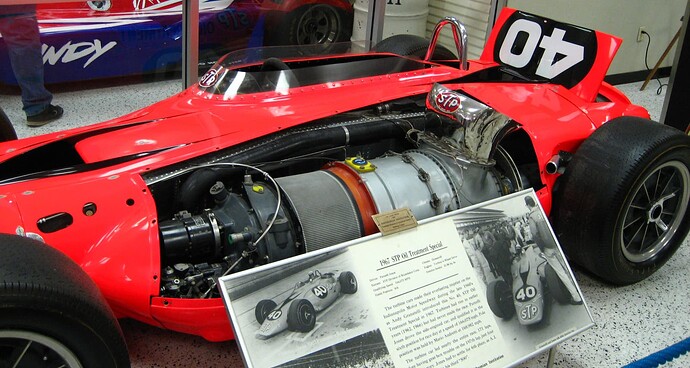

But then, reminiscent of the STP-Paxton Turbocar’s flop at the Indy 500:

…they had an amazing start, only to be defeated by the complexity of their system…

Obviously (at least to me), to win in an arena like BattleBots you need a mechanical design that can withstand serious impacts as much as you need high-performance control.

The kind of thoughts that run through my head:

Could you possibly get rid of what we think of as “electronics” entirely by reverting to Tesla’s “Method of and apparatus for controlling mechanism of moving vessels or vehicles”? And no, I’m not talking about Musk’s Tesla:

Who have we known better versed in the history of “technological fallbacks” than John?

And what sort of energy storage is most resilient to shocks while being able to deliver huge amounts of power, on demand, to spinning weapons as well as to the drive wheels?

Flywheels? Homopolar motor/generators?

Perhaps even low-internal-resistance ultracapacitors?

https://ultrafastcap.com/product-line

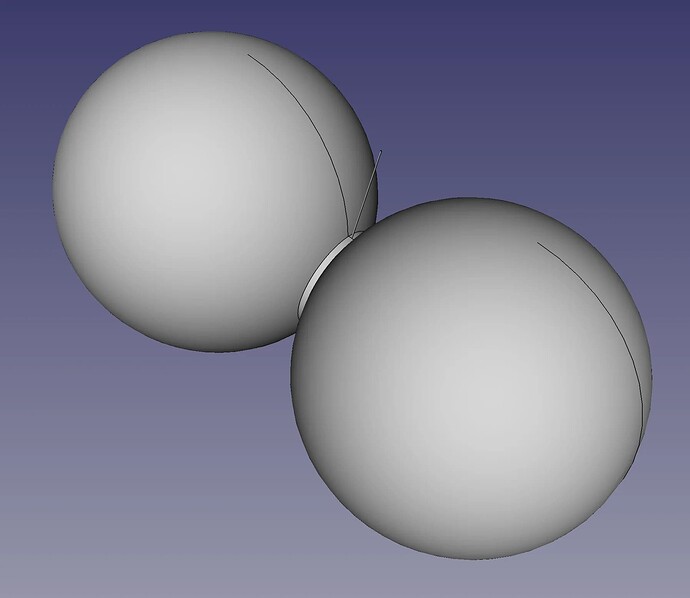
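To put the flywheel-versus-ultracapacitor question on a back-of-the-envelope footing, here is a hypothetical sketch using the textbook formulas: E = ½·I·ω² for a uniform solid disc (I = ½·m·r²), E = ½·C·V² for a capacitor, and P = V²/(4·ESR) as the matched-load power ceiling set by internal resistance. The function names and every example number (a 10 kg, 0.2 m disc at 3000 rpm; a 500 F, 16 V bank with 2 mΩ ESR) are illustrative assumptions, not specs of any product on the linked page. Shock resilience, the other half of the question, isn’t captured here at all.

```python
import math


def flywheel_energy_j(mass_kg, radius_m, rpm):
    """Kinetic energy of a uniform solid disc: E = 1/2 I w^2, with I = 1/2 m r^2."""
    inertia = 0.5 * mass_kg * radius_m ** 2
    omega = rpm * 2 * math.pi / 60  # rev/min -> rad/s
    return 0.5 * inertia * omega ** 2


def capacitor_energy_j(capacitance_f, voltage_v):
    """Energy stored in a capacitor charged to voltage_v: E = 1/2 C V^2."""
    return 0.5 * capacitance_f * voltage_v ** 2


def capacitor_peak_power_w(voltage_v, esr_ohm):
    """Maximum power into a matched load (R_load = ESR): P = V^2 / (4 ESR)."""
    return voltage_v ** 2 / (4 * esr_ohm)


if __name__ == "__main__":
    # Hypothetical example values, not measurements of any real hardware.
    print(f"flywheel:       {flywheel_energy_j(10, 0.2, 3000):.0f} J")
    print(f"capacitor:      {capacitor_energy_j(500, 16):.0f} J")
    print(f"cap peak power: {capacitor_peak_power_w(16, 0.002):.0f} W")
```

Two caveats on reading the numbers: the matched-load figure is a ceiling, not an operating point, since at that load half the power is dissipated in the ESR; and discharging a capacitor from V down to V/2 releases only 75% of its stored energy, much as a flywheel only gives up the energy lost as it slows.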

And what about this simple approach to a self-righting BattleBot?

Ignore for a moment the low traction implied by the small contact patch and the lack of a viable weapon, since those can be remedied by adding a cylindrical section to each wheel, with a spray-on traction coating for the metal surface, and by bisecting the axle with a torque-driven weapon.

And, as for transmitting control signals to such a vehicle:

Boy do I wish I could talk to John!