The components built in previous episodes begin to come together on a large plywood board as the control logic, instruction decoder, clock, and arithmetic/logic unit are wired up and tested as a single unit, powered by a beefy power supply.

The fact that I don’t understand what is happening here drives home to me that I have probably missed some of the most important developments of my lifetime. In my defense, I will say that when I was acquiring my education (I started college in 1962), such knowledge was pretty much confined to specialists working in what was a new and rapidly advancing field. Even today, as an end user of consumer hardware & software, I find that this lack of deeper understanding still has frustrating consequences, and not infrequently.

Actually, if you go back and watch this series from the beginning (here is a playlist of the entire project), you'll find it provides a very good introduction to the electrical and logic building blocks that make up all computers, using just about the simplest case: a computer with a one-bit data path. Such processors are genuinely useful: the machine being built here is modeled on the Motorola MC14500B Industrial Control Unit, which was widely used to replace electromechanical relay logic in industrial equipment.
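To make "one-bit data path" concrete, here is a minimal Python sketch of a one-bit controller running the kind of program that replaced relay logic. The opcode mnemonics (LD, LDC, AND, ANDC, OR, ORC, XNOR, STO, STOC) are borrowed from the MC14500B instruction set, but everything else is a simplification I'm assuming for illustration: the function name `scan`, the dictionary standing in for the I/O lines, and the omission of the real chip's IEN/OEN enable registers, its JMP/RTN/SKZ instructions, and its flag outputs.

```python
# Simplified sketch of a one-bit controller with MC14500B-style mnemonics.
# Hypothetical: scan(), the io dict as the I/O map; IEN/OEN, jumps, flags omitted.

def scan(program, io):
    """Run one pass ("scan") of the program over the one-bit I/O map `io`."""
    rr = 0  # the one-bit Result Register: the whole "data path"
    for op, addr in program:
        if op == "LD":
            rr = io[addr]
        elif op == "LDC":            # load complement
            rr = 1 - io[addr]
        elif op == "AND":
            rr &= io[addr]
        elif op == "ANDC":           # AND with complement
            rr &= 1 - io[addr]
        elif op == "OR":
            rr |= io[addr]
        elif op == "ORC":            # OR with complement
            rr |= 1 - io[addr]
        elif op == "XNOR":
            rr = 1 if rr == io[addr] else 0
        elif op == "STO":            # store Result Register to an output
            io[addr] = rr
        elif op == "STOC":           # store its complement
            io[addr] = 1 - rr
        else:
            raise ValueError(f"unsupported opcode {op}")
    return io

# Seal-in (latching) motor starter, a classic relay circuit:
# motor = (start OR motor) AND NOT stop
PROGRAM = [("LD", "start"), ("OR", "motor"), ("ANDC", "stop"), ("STO", "motor")]

io = {"start": 0, "stop": 0, "motor": 0}
for start, stop in [(1, 0), (0, 0), (0, 1), (0, 0)]:
    io["start"], io["stop"] = start, stop
    scan(PROGRAM, io)
    print(f"start={start} stop={stop} -> motor={io['motor']}")
```

Pressing "start" turns the motor on, it stays on after the button is released because the program reads its own output back, and "stop" breaks the latch: motor goes 1, 1, 0, 0. Repeating the scan endlessly is how such a controller stands in for a panel of relays.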

Thanks. I will try to invest the time in the series, since I have been trying to improve my understanding of computers. My usual way of understanding things is reductionism, along with building some framework for how the parts relate to one another structurally. When I imagine reducing computing to its simplest act, I think I ought to be able to trace a single bit from input to output as a test of my understanding. Is this a reasonable approach?

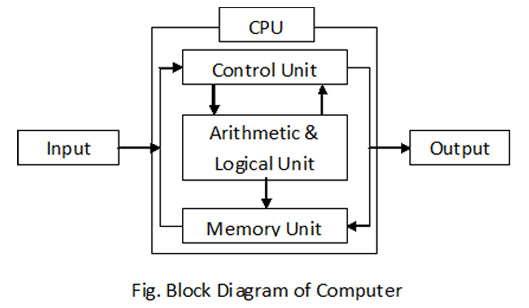

I tend to think of the foundations of computing and computer architecture from two directions: the logic elements from which computers are built, and the block diagram of the units that make up the computer. The logic elements can be very simple: you can build an entire computer out of a single kind of component, the NOR gate. In fact, the logic of the Apollo Guidance Computer, which navigated the Apollo spacecraft to the Moon, was built entirely out of three-input NOR gates (about 4,100 single-gate chips in the Block I design, and about 2,800 dual-gate chips in the Block II machines that flew the lunar missions), from which all other logic elements such as AND gates, flip-flops, counters, adders, and shift registers were assembled.
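As a rough illustration of that claim, the Python sketch below treats a NOR function as the only primitive and derives NOT, OR, AND, XOR, a full adder, a four-bit ripple-carry adder, and a set/reset flip-flop purely by wiring NORs together. All names are hypothetical, `nor()` accepts any number of inputs (so it covers the three-input case), and this models logical behavior only, not the AGC's actual circuits or timing.

```python
# Everything below is built only from nor(); names are hypothetical.

def nor(*inputs):
    """NOR of one-bit inputs: 1 only when every input is 0."""
    return 0 if any(inputs) else 1

def not_(a):
    return nor(a)

def or_(a, b):
    return nor(nor(a, b))               # invert the NOR

def and_(a, b):
    return nor(nor(a), nor(b))          # De Morgan: NOT(NOT a OR NOT b)

def xor(a, b):
    return and_(or_(a, b), nor(and_(a, b)))   # (a OR b) AND NOT(a AND b)

def full_adder(a, b, cin):
    """Add three bits; return (sum, carry_out)."""
    s1 = xor(a, b)
    return xor(s1, cin), or_(and_(a, b), and_(s1, cin))

def add(x, y, width=4):
    """Ripple-carry adder: chain `width` full adders, least significant bit first."""
    carry, total = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        total |= bit << i
    return total, carry

def sr_latch(s, r, q):
    """Cross-coupled NOR flip-flop: given set, reset, and old Q, return new Q."""
    qbar = nor(s, q)
    for _ in range(4):                  # let the feedback loop settle
        q, qbar = nor(r, qbar), nor(s, q)
    return q

print(add(9, 9))            # (2, 1): 9 + 9 = 18 overflows four bits
print(sr_latch(1, 0, 0))    # 1: "set" stores a one
print(sr_latch(0, 0, 1))    # 1: with both inputs low, the latch remembers
```

The flip-flop is the interesting step: feeding two NOR outputs back into each other's inputs turns pure combinational logic into one bit of memory, which is the seed of registers, counters, and shift registers.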

The block diagram can be as simple as this.

Despite everything within the CPU box being built from the same fundamental logic elements, the separate functional units are very different in their structure and in how they interact with one another. What matters is not so much the data path as how the Control Unit, executing instructions from the Memory Unit, manipulates data from both the Input Unit and the Memory Unit to modify memory and eventually produce Output. This same architecture applies regardless of how wide the data path through the machine is or how complex the operations performed by the Arithmetic & Logic Unit are: these are refinements which make the computer easier to program and faster to run, but they don't fundamentally change how it works.
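The point that data-path width is a refinement rather than a change of architecture can be sketched in a few lines of Python. This is a hypothetical toy machine, not any real instruction set: the instruction names, the separate program list standing in for the Memory Unit's instructions, and the single accumulator are my assumptions. The same fetch-execute loop and the same program run unchanged on a one-bit and an eight-bit data path; only the `width` mask differs.

```python
from collections import deque

# ALU operations on the accumulator and a memory operand (hypothetical set).
ALU_OPS = {
    "ADD": lambda a, b: a + b,
    "AND": lambda a, b: a & b,
    "OR": lambda a, b: a | b,
    "XOR": lambda a, b: a ^ b,
}

def run(program, inputs, width):
    """Fetch-execute loop for a toy machine whose data path is `width` bits."""
    mask = (1 << width) - 1          # every value is truncated to the data path
    memory = {}                      # data memory: address -> value
    acc = 0                          # accumulator feeding the ALU
    pc = 0                           # program counter kept by the Control Unit
    pending, output = deque(inputs), []
    while pc < len(program):
        op, addr = program[pc]       # Control Unit fetches the next instruction
        pc += 1
        if op == "IN":               # Input Unit -> memory
            memory[addr] = pending.popleft() & mask
        elif op == "LOAD":           # memory -> accumulator
            acc = memory.get(addr, 0)
        elif op == "STORE":          # accumulator -> memory
            memory[addr] = acc
        elif op == "OUT":            # memory -> Output Unit
            output.append(memory.get(addr, 0))
        elif op in ALU_OPS:          # ALU combines accumulator with memory
            acc = ALU_OPS[op](acc, memory.get(addr, 0)) & mask
        else:
            raise ValueError(f"unknown instruction {op}")
    return output

# One program: read two values, add them, write the sum out.
ADD_TWO = [("IN", 0), ("IN", 1), ("LOAD", 0), ("ADD", 1), ("STORE", 2), ("OUT", 2)]

print(run(ADD_TWO, [1, 1], width=1))      # [0]: 1 + 1 overflows a one-bit path
print(run(ADD_TWO, [200, 100], width=8))  # [44]: 300 wraps modulo 256
```

On the one-bit machine ADD degenerates into XOR, and a programmer would have to build wider arithmetic out of many such steps; the wider path just lets the ALU do more per instruction, while the Control Unit's loop is identical.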

Amazingly, this architecture was invented by Charles Babbage in the 19th century and first described in 1837. The 1842 “Sketch of the Analytical Engine” by L. F. Menabrea, translated and annotated by Ada Augusta, Countess of Lovelace, describes it in detail, although in a vocabulary different from modern terminology: here is a Glossary I prepared to accompany my software reconstruction of The Analytical Engine.

Thank you, John. I am very appreciative.