May 13, 2024

We’re announcing GPT-4o, our new flagship model that can reason across audio, vision, and text in real time.

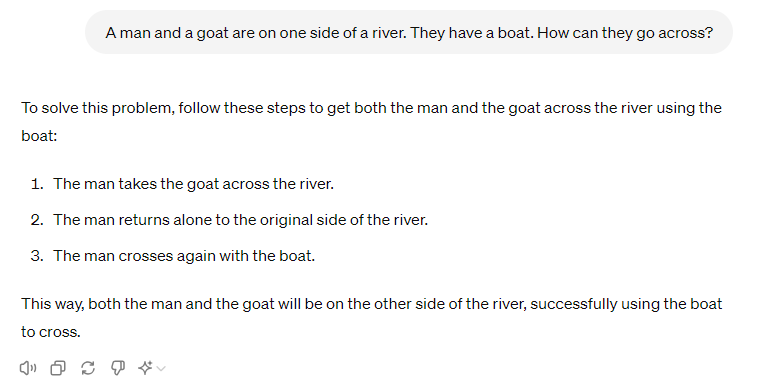

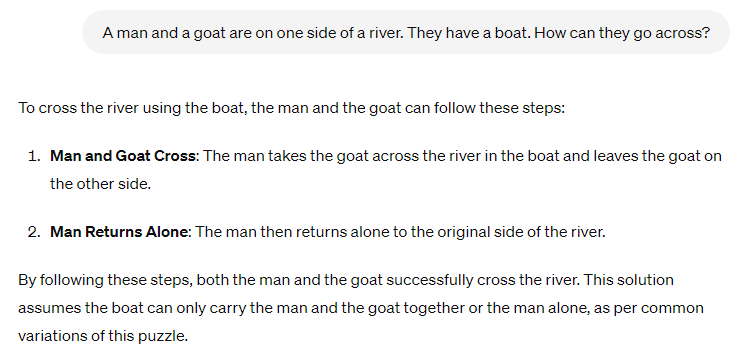

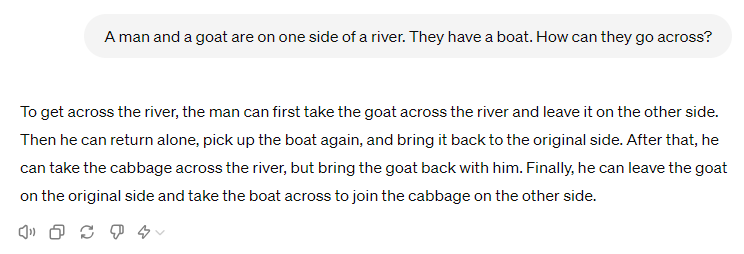

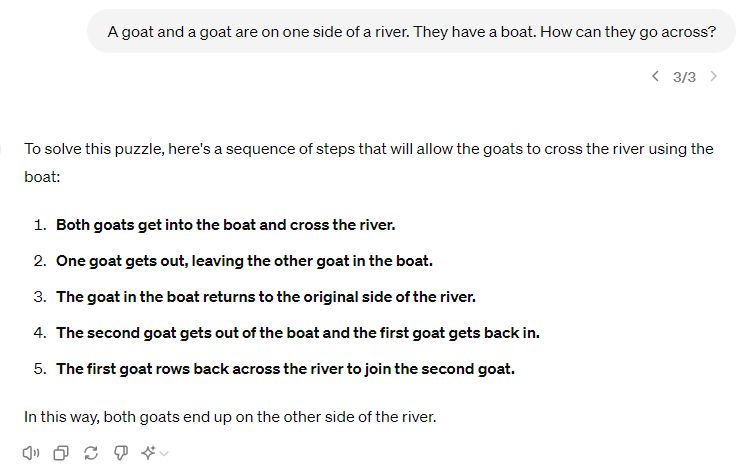

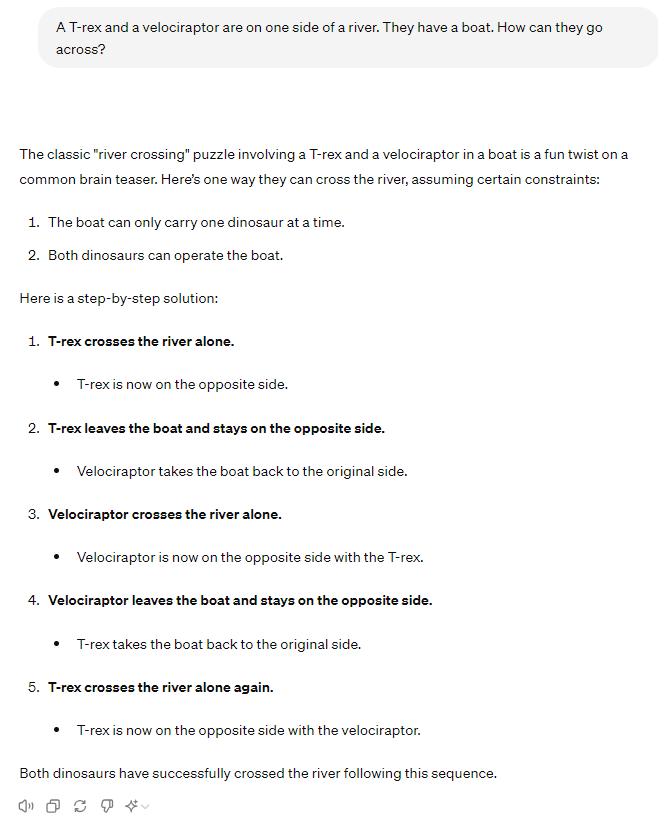

GPT-4o (“o” for “omni”) is a step towards much more natural human-computer interaction: it accepts as input any combination of text, audio, image, and video and generates any combination of text, audio, and image outputs. It can respond to audio inputs in as little as 232 milliseconds, with an average of 320 milliseconds, which is similar to human response time in a conversation. It matches GPT-4 Turbo performance on text in English and code, with significant improvement on text in non-English languages, while also being much faster and 50% cheaper in the API. GPT-4o is especially better at vision and audio understanding compared to existing models.

Prior to GPT-4o, you could use Voice Mode to talk to ChatGPT with latencies of 2.8 seconds (GPT-3.5) and 5.4 seconds (GPT-4) on average. To achieve this, Voice Mode is a pipeline of three separate models: one simple model transcribes audio to text, GPT-3.5 or GPT-4 takes in text and outputs text, and a third simple model converts that text back to audio. This process means that the main source of intelligence, GPT-4, loses a lot of information: it can’t directly observe tone, multiple speakers, or background noises, and it can’t output laughter, singing, or express emotion.
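The three-stage Voice Mode pipeline described above can be illustrated with a toy sketch. Everything here is hypothetical: `AudioClip`, `transcribe`, `text_model`, `synthesize`, and `voice_mode_pipeline` are made-up stand-ins, not OpenAI APIs, and the stubs do no real speech or language processing. The point is only to show where tone, speaker count, and background noise get dropped when the middle model sees nothing but text.

```python
from dataclasses import dataclass


@dataclass
class AudioClip:
    """Toy stand-in for recorded speech: the words plus non-text cues."""
    words: str
    tone: str        # e.g. "excited"
    speakers: int    # number of distinct voices
    background: str  # e.g. "street noise"


def transcribe(clip: AudioClip) -> str:
    """Stage 1 (hypothetical stub): speech-to-text keeps only the words."""
    return clip.words


def text_model(prompt: str) -> str:
    """Stage 2 (hypothetical stub): text in, text out, like GPT-3.5 or GPT-4."""
    return f"You said: {prompt}"


def synthesize(text: str) -> AudioClip:
    """Stage 3 (hypothetical stub): text-to-speech with a fixed neutral delivery."""
    return AudioClip(words=text, tone="neutral", speakers=1, background="none")


def voice_mode_pipeline(clip: AudioClip) -> AudioClip:
    """Chain the three stages; only a plain string crosses each boundary."""
    transcript = transcribe(clip)   # tone, speakers, background are lost here
    reply_text = text_model(transcript)
    return synthesize(reply_text)   # output cannot carry emotion it never saw


question = AudioClip(words="hello", tone="excited", speakers=2,
                     background="street noise")
reply = voice_mode_pipeline(question)
print(reply)
```

Because `text_model` receives only a string, nothing about the input's tone or speakers can influence the reply, which is the information loss the announcement attributes to the cascaded design.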

With GPT-4o, we trained a single new model end-to-end across text, vision, and audio, meaning that all inputs and outputs are processed by the same neural network. Because GPT-4o is our first model combining all of these modalities, we are still just scratching the surface of exploring what the model can do and its limitations.


Unfortunately, they also say they’re crippling it with the same “safety”, “fairness” and “misinformation” crimethink prevention as other GPT models, while also restricting its abilities and setting message limits even for paid use.

On the LLM Leaderboard, GPT-4o is now the top model, with an overall index of 100 vs. 94 for GPT-4 Turbo. Its Chatbot Arena score is 1310 vs. 1257. Its score on the coding benchmark HumanEval rose to 90.2 from 85.1, and on MMLU, the reasoning and knowledge test, it scores 89% vs. 86% (70% for GPT-3.5 Turbo).