This is one of the most spectacular things I’ve seen in quite a while. It combines several artificial intelligence techniques to create or modify images from natural-language text prompts, and it renders photorealistically how an edit interacts with the rest of the original scene. The technique is described in the paper “GLIDE: Towards Photorealistic Image Generation and Editing with Text-Guided Diffusion Models”. Here is the abstract.

> Diffusion models have recently been shown to generate high-quality synthetic images, especially when paired with a guidance technique to trade off diversity for fidelity. We explore diffusion models for the problem of text-conditional image synthesis and compare two different guidance strategies: CLIP guidance and classifier-free guidance. We find that the latter is preferred by human evaluators for both photorealism and caption similarity, and often produces photorealistic samples. Samples from a 3.5 billion parameter text-conditional diffusion model using classifier-free guidance are favored by human evaluators to those from DALL-E, even when the latter uses expensive CLIP reranking. Additionally, we find that our models can be fine-tuned to perform image inpainting, enabling powerful text-driven image editing. We train a smaller model on a filtered dataset and release the code and weights at https://github.com/openai/glide-text2im.
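Classifier-free guidance, which the abstract credits for the best results, is simple to state: at each denoising step the model predicts the noise twice, once with the caption and once with an empty caption, and the prediction is pushed further in the direction of their difference, scaled by a guidance scale. The following is a minimal sketch of just that combination step, assuming NumPy arrays as stand-ins for the model's predictions; the function name and toy values are hypothetical, and this is not the code from the GLIDE repository.

```python
import numpy as np


def classifier_free_guidance(eps_cond: np.ndarray,
                             eps_uncond: np.ndarray,
                             guidance_scale: float) -> np.ndarray:
    """Combine text-conditional and unconditional noise predictions.

    Starts from the unconditional prediction and moves toward the
    conditional one; a guidance_scale above 1 extrapolates past it.
    """
    return eps_uncond + guidance_scale * (eps_cond - eps_uncond)


# Hypothetical toy predictions standing in for a diffusion model's outputs
# with the caption (cond) and with an empty caption (uncond).
eps_cond = np.array([0.5, -0.2, 0.1])
eps_uncond = np.array([0.3, 0.0, 0.1])

guided = classifier_free_guidance(eps_cond, eps_uncond, guidance_scale=3.0)
print(guided)
```

With a guidance scale of 1 the result is just the conditional prediction, and with 0 it is the unconditional one; larger values push samples harder toward the caption, which is the diversity-for-fidelity trade-off the abstract mentions.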

You have to try this yourself to appreciate how revolutionary this is. You can run it on Google Colab: visit the GitHub page for the GLIDE project, then click the “Open in Colab” button in the description for the model you wish to try. I produced the following using the `clip_guided` model. For each, I show the prompt and the image(s) that resulted.

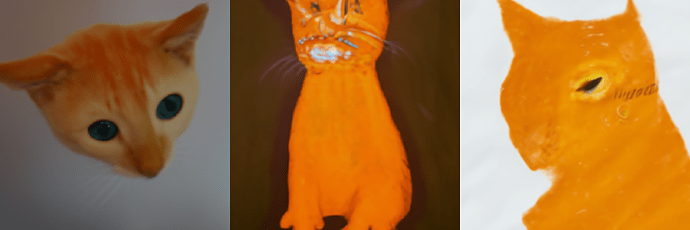

orange cat in the style of Van Gogh

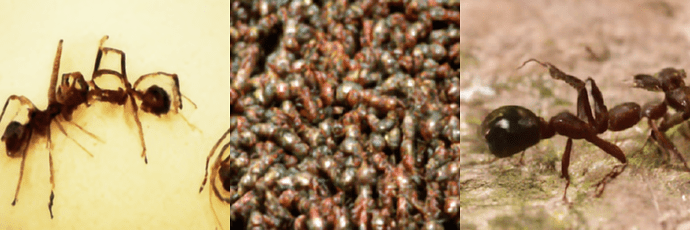

ants from space

cats by Picasso

corgi wearing a party hat

To make your own custom images, load the notebook and edit the “Sampling parameters” in section 18: set the prompt to describe the image you want, and set batch_size to the number of images to generate (execution time grows roughly linearly with this number). Then select Runtime > Run all from the menu at the top. If you get anything interesting or amusing, please post it in the comments.
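As an example, a “Sampling parameters” cell edited to ask for four corgi pictures might look like the sketch below. This assumes the cell uses plain variables named `prompt` and `batch_size`, as described above; the notebook's cell may contain other settings, which can be left at their defaults.

```python
# Sampling parameters (a sketch of the edited cell; values are examples)
prompt = "corgi wearing a party hat"  # text describing the image you want
batch_size = 4                        # how many images to generate per run
```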

This publicly available demo is based on a smaller model than the one shown in the video, trained on a filtered dataset (as the abstract notes), so its results may not be as stunning.