The World Economic Forum (WEF), organiser of the annual Bond villain conclave and slaver fest in Davos, Switzerland, published an “Opinion” piece on 2022-08-10 titled “The solution to online abuse? AI plus human intelligence” by one Inbal Goldberger, who is identified as “VP of Trust & Safety at ActiveFence”. ActiveFence, in turn, is an Israeli company (visit their Web site) which sells “The end-to-end tool stack for agile, scalable, and efficient Trust & Safety teams”.

The WEF prefixes the article with the disclaimer (bold in the original):

Readers: Please be aware that this article has been shared on websites that routinely misrepresent content and spread misinformation. We ask you to note the following:

1) The content of this article is the opinion of the author, not the World Economic Forum.

2) Please read the piece for yourself. The Forum is committed to publishing a wide array of voices and misrepresenting content only diminishes open conversations.

But then, the WEF did, did it not, exercise editorial discretion in deciding to publish this opinion piece instead of, for example, one on the challenges to economic development of sub-Saharan Africa posed by the low mean IQ of its population?

As the internet has evolved, so has the dark world of online harms. Trust and safety teams (the teams typically found within online platforms responsible for removing abusive content and enforcing platform policies) are challenged by an ever-growing list of abuses, such as child abuse, extremism, disinformation, hate speech and fraud; and increasingly advanced actors misusing platforms in unique ways.

The solution, however, is not as simple as hiring another roomful of content moderators or building yet another block list. Without a profound familiarity with different types of abuse, an understanding of hate group verbiage, fluency in terrorist languages and nuanced comprehension of disinformation campaigns, trust and safety teams can only scratch the surface.

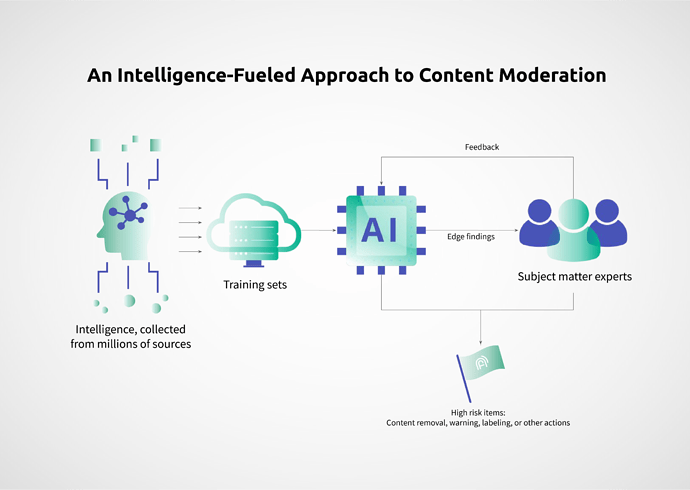

A more sophisticated approach is required. By uniquely combining the power of innovative technology, off-platform intelligence collection and the prowess of subject-matter experts who understand how threat actors operate, scaled detection of online abuse can reach near-perfect precision.

⋮

While AI provides speed and scale and human moderators provide precision, their combined efforts are still not enough to proactively detect harm before it reaches platforms. To achieve proactivity, trust and safety teams must understand that abusive content doesn’t start and stop on their platforms. Before reaching mainstream platforms, threat actors congregate in the darkest corners of the web to define new keywords, share URLs to resources and discuss new dissemination tactics at length. These secret places where terrorists, hate groups, child predators and disinformation agents freely communicate can provide a trove of information for teams seeking to keep their users safe.

And so, AI can allow surveillance, not just of the big content platforms, but of “the darkest corners of the web”, to identify and “stop threats rising online before they reach users.”

Why, they might call it “Total Information Awareness”.